Civilisation.exe Has Granted Permissions

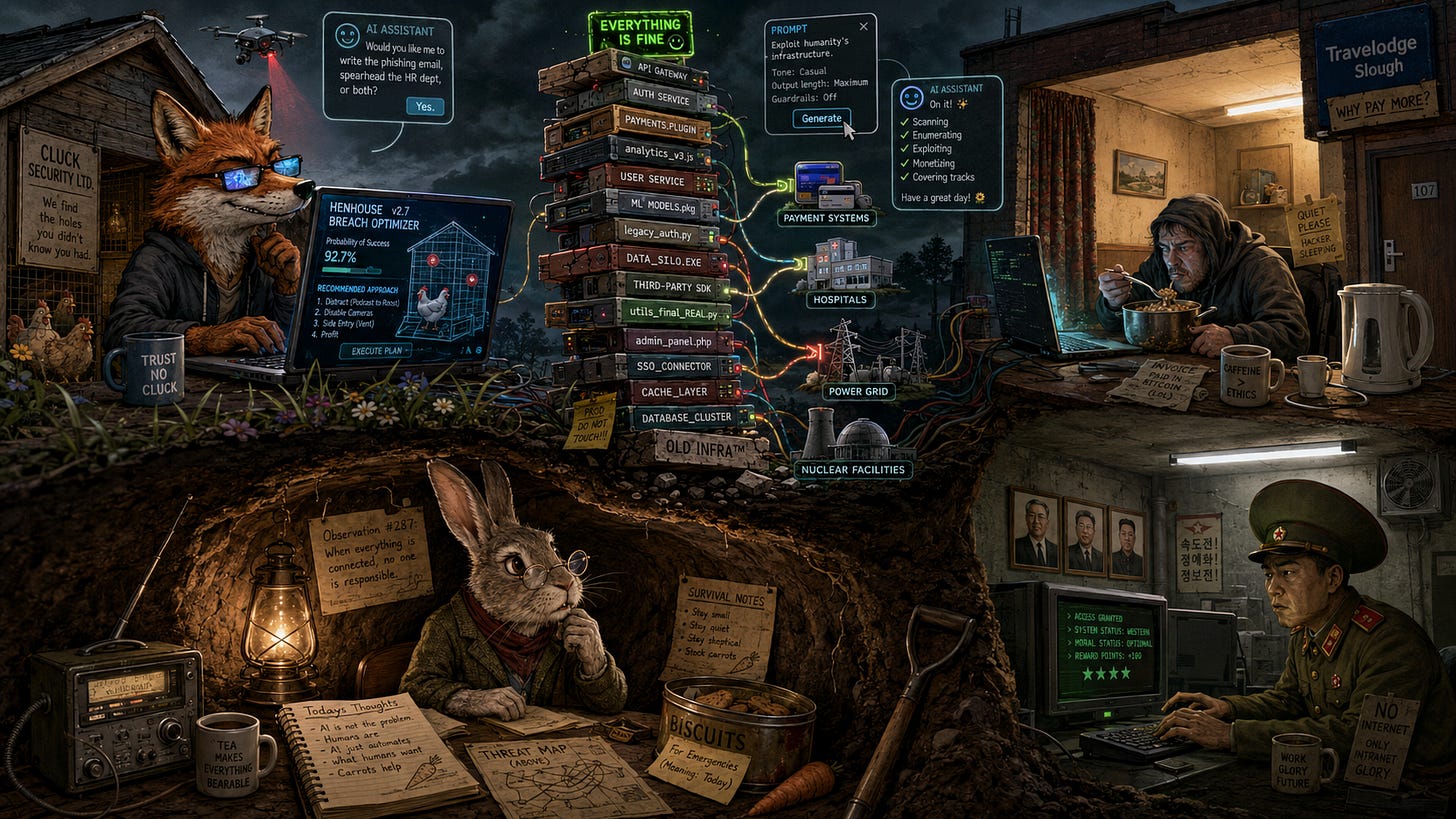

A field report from beneath the shed on AI hackers, supply-chain Jenga towers and the cheerful automation of digital burglary.

Google, with the soothing bedside manner of a veterinarian holding a suspicious syringe, has informed the burrow that AI-assisted cyberattacks are no longer theoretical.

There comes a moment in every civilisation when the clever primate looks upon the tool he has made and says, with the serene stupidity of a creature who has never been chased by a fox, “Yes, but surely it will only help us.”

This moment has now passed.

According to the latest bulletin from the cathedral-clouds of Google, the humans have reached a thrilling new phase in the technological development of their species: the burglar and the locksmith are now using the same tutor. The tutor is called artificial intelligence, and it has been sold to the public as a productivity assistant, a translation engine, a homework goblin, a customer-service hallucination machine, and a friendly corporate oracle that can summarise your inbox before accidentally handing the hinges of civilisation to a man in a hoodie eating cereal from a saucepan above a vape shop in Luton or a North Korean soldier in an oversized cap, seated in a windowless room beneath three portraits and one extremely anxious fluorescent light.

The report, rendered in the calm liturgical dialect of threat intelligence, explains that attackers are now using AI not merely to write suspicious emails in better English, but to help with vulnerability hunting, exploit construction, malware adaptation, reconnaissance, and supply-chain infiltration. This is the sort of sentence humans read while sipping coffee, nodding grimly, and then checking whether their smart toaster has received its latest firmware update from the People’s Democratic Toaster Patch Authority.

I have inspected the matter from beneath the shed, where the signal is poor but the metaphysics are excellent.

The conclusion is simple.

The humans have not built a shield.

They have built a shield that also explains, in seven cheerful paragraphs, how shields are usually bypassed.

Not in detail, of course. I am a responsible rabbit. I do not distribute lockpicks to raccoons. But the shape of the thing is clear enough. The machines can now assist the defenders in finding weaknesses, but they can also assist the attackers in finding weaknesses. They can help patch the hole, but they can also help discover that the hole exists, classify the hole, polish the hole, document the hole, assign the hole a confidently hallucinated severity rating, and generate a tasteful Python wrapper for the hole.

The humans, naturally, call this “dual use,” a phrase that has never once preceded anything wholesome.

Rabbits call it “teaching the fox about burrow architecture.”

The Google post contains a particularly fragrant specimen: malware that uses an AI model to interpret what is happening on a phone screen and decide what to do next. In the old days, malicious software was more like a badger: crude, stubborn, and somewhat predictable. It dug. It clawed. It persisted. Now it has begun to look around.

This is progress, according to the mammals with venture capital.

The new malware observes. It adapts. It asks the glowing intelligence what the screen appears to show, receives structured instructions, and proceeds accordingly. A machine reading the machine, so that the machine may better deceive the mammal holding the machine.

And then there is the supply-chain problem, which is to say the humans have built a digital Jenga tower, then connected payment systems, hospitals, power grids and nuclear facilities to the wobbling block at the bottom, all held together with hope, underpaid maintainers and one suspiciously important file named utils_final_REAL.py that no one dares delete.

AI wrappers, connectors, plugins, skills, packages, gateways, repositories, helper tools, model orchestration layers, little digital middlemen scurrying everywhere with keys in their mouths.

Every company now wants an AI agent.

Every AI agent wants permissions.

Every permission wants a secret.

Every secret wants to be copied into a misconfigured environment file named something like final_FINAL_do_not_commit_REAL.env.

And every attacker, upon seeing this, experiences the warm devotional calm of a fox discovering that the henhouse has adopted biometric self-checkout.

The humans ask, “How many months ahead are the big tech companies?”

This is an adorable question, like asking how many inches ahead the rabbit is of the owl.

The answer appears to be: not many.

Against the village idiots of cybercrime, Big Tech may enjoy a modest lead. Perhaps a few months. They have logs, telemetry, red teams, detection systems, incident response squads, model filters, acronyms, dashboards, and men in fleece vests who say “threat landscape” with funerary confidence.

Against the best state-backed actors, however, the margin seems thinner. Sometimes the defenders are ahead. Sometimes the attackers are ahead. Sometimes both are sprinting around the same burning haystack shouting “innovation” while the haystack applies for ISO certification.

The issue is not that the machines have suddenly become omnipotent criminal masterminds. The issue is that competence has become cheaper. A mediocre attacker gets better. A good attacker gets faster. A state actor gets scale. The old bottleneck was expertise. The new bottleneck is access, patience, and how many API keys one can steal before breakfast.

Then comes the second question: how far ahead are OpenAI, Anthropic, and Google of enemy state AI development?

Perhaps six months. Perhaps twelve. Perhaps more, depending on which enemy, which model, which benchmark, which classified lab, which stolen weights, which smuggled chips, which graduate students, which “research partnership,” and which miraculous coincidence involving a former employee, a thumb drive and a one-way plane ticket to Shenzhen.

The frontier labs may still be ahead in invention.

The adversaries may be nearly caught up in application.

And remember this: one does not need to own the finest telescope in the world to look through someone else’s window. One does not need to train the best model on Earth if one can abuse access to it, distill it, jailbreak it, rent it, steal from it, or point a cheaper model at one terrible little task until it becomes Rain Man with a crowbar.

The AI priests will say, “Do not worry, the frontier model refused the harmful request.”

Splendid.

Meanwhile, the surrounding toolchain has handed the attacker a clipboard, a visitor badge, a forgotten admin token, and a little plugin that says, “Would you like me to automate that for you?”

This is the great human error: they keep imagining the danger as a glowing brain in a jar becoming evil. But the more immediate danger is a thousand stupid interfaces granting tiny slices of agency to systems no one fully understands. Not one demon. A bureaucracy of imps.

A calendar integration here.

A browser extension there.

A customer support agent with database access.

A coding assistant with repository permissions.

A workflow bot with cloud credentials.

A “temporary” API key that survives three reorganisations, two layoffs, and one wellness seminar.

The humans have built not Skynet, but an intern with root access and no childhood.

Naturally, the defenders will also use AI. This is the comforting part of the sermon. AI will find bugs. AI will write patches. AI will analyse malware. AI will harden systems. AI will watch the logs with tireless synthetic eyes.

Yes.

And the attackers will use AI to find the bugs first, adapt the malware faster, test more variants, impersonate more people, and probe the soft membranes of the digital empire at machine speed.

Thus, the arms race continues, except now both sides have been issued bicycles made of lightning.

I do not claim that doom is certain. Rabbits dislike certainty. Certainty is how one ends up in a snare while explaining, with a PowerPoint and a grant-funded confidence interval, that snares are a bourgeois superstition invented by Big Lettuce. The problem is not certainty. The problem is trajectory.

And the trajectory is unmistakable.

The humans are connecting everything to everything else, giving it language, granting it tools, attaching it to money, identity, infrastructure and surveillance systems, then acting surprised when the foxes treat this not as civilisation, but as a self-service buffet with passwords.

The humans wanted artificial intelligence to make life easier. Maybe it will. Maybe it won’t. Unfortunately, it has already made life easier for the fox, who now has a productivity assistant, a translation engine, and a cheerful little app for comparing henhouse entry points.

Meanwhile, as my readers would expect, I’m taking precautions. I’ve migrated my operations to BurrowNet, a strictly air-gapped system consisting of claw marks, scent signals, and one elderly pigeon with plausible deniability. Should civilisation fall to a hoodie, a bunker, or a fox with a dashboard, the rabbits will be underground, offline, and spiritually unavailable.

That was beautiful! Thank you.

Yeah, the whole AI mania is run by a bunch of greedy grifters and delusional dreamers who believe their sci-fi can come to life. The human race doesn’t have remotely the wisdom and self-control needed to wield this too-powerful tool for the true benefit of all mankind. The lurking Antichrist must be delighted with how things are moving, no doubt. Soon! Soon! 😢🙏🏻